What is a Durable Workflow?

A durable workflow is a long‑running, stateful business process that automatically remembers where it left off, survives crashes or restarts, and can resume or retry instead of starting over.

Unlike a simple script that runs once and dies, a durable workflow:

- Persists its state (current step, variables, context) in a database or durable store

- Handles timeouts, retries, and failures without losing progress

- Supports human‑in‑the‑loop steps, approvals, and external events (e.g., a user clicking “confirm”)

In effect, durable workflows bring human‑like resilience to automation: they pause, recover, and continue, just like a person who remembers what they’ve already done.
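To make the idea concrete, here is a minimal sketch of checkpoint-and-resume, assuming SQLite as the durable store. The names `run_workflow`, `checkpoints`, `reserve`, and `charge` are hypothetical and chosen for illustration; real engines add leases, timeouts, and much more.

```python
import json
import sqlite3


def run_workflow(conn, workflow_id, steps):
    """Run steps in order, skipping any step that already has a stored result."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS checkpoints ("
        "workflow_id TEXT, step TEXT, result TEXT, "
        "PRIMARY KEY (workflow_id, step))"
    )
    results = {}
    for name, fn in steps:
        row = conn.execute(
            "SELECT result FROM checkpoints WHERE workflow_id = ? AND step = ?",
            (workflow_id, name),
        ).fetchone()
        if row is not None:
            # Step finished in an earlier run: reuse its result, do not re-run it.
            results[name] = json.loads(row[0])
            continue
        result = fn(results)
        conn.execute(
            "INSERT INTO checkpoints VALUES (?, ?, ?)",
            (workflow_id, name, json.dumps(result)),
        )
        conn.commit()  # the checkpoint is durable once this returns
        results[name] = result
    return results


calls = []
attempts = {"charge": 0}


def reserve(ctx):
    calls.append("reserve")
    return {"item": "sku-1"}


def charge(ctx):
    calls.append("charge")
    attempts["charge"] += 1
    if attempts["charge"] == 1:
        raise RuntimeError("simulated crash")
    return {"paid": True}


conn = sqlite3.connect(":memory:")
steps = [("reserve", reserve), ("charge", charge)]
try:
    run_workflow(conn, "order-42", steps)  # first run crashes in "charge"
except RuntimeError:
    pass
final = run_workflow(conn, "order-42", steps)  # second run resumes at "charge"
```

On the second run, `reserve` is not executed again; only the step that failed is retried.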

Why Durable Workflows Are Important

Resilience to Failures

If a service crashes, a network call times out, or a task fails, the workflow engine persists state and resumes from the last checkpoint instead of dropping everything. This is crucial for production‑grade systems where “starting over” is not acceptable.

Long‑Running Business Processes

Many real‑world flows—checkout, onboarding, approvals, batch jobs, or AI‑agent pipelines—take minutes, hours, or even days. Durable workflows manage timeouts, retries, and waiting for external events without requiring manual state management.

Cleaner, More Maintainable Code

Instead of sprinkling retry logic, state‑saving, and rollback code everywhere, developers write imperative, readable workflows. The platform handles the reliability “plumbing” under the hood.
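As a rough illustration of that separation, the sketch below keeps the retry policy in a hypothetical `with_retries` decorator so the workflow body reads as plain sequential code. This is an in-process approximation only; a real platform would also persist attempts and results.

```python
import time
from functools import wraps


def with_retries(max_attempts=3, base_delay=0.0):
    """Retry the wrapped function with exponential backoff (hypothetical helper)."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_attempts + 1):
                try:
                    return fn(*args, **kwargs)
                except Exception:
                    if attempt == max_attempts:
                        raise  # out of attempts: surface the error
                    time.sleep(base_delay * 2 ** (attempt - 1))
        return wrapper
    return decorator


attempts = {"n": 0}


@with_retries(max_attempts=3)
def send_welcome_email(user):
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise ConnectionError("transient failure")
    return f"sent to {user}"


def onboarding(user):
    # The workflow body contains no retry or error-handling code.
    return send_welcome_email(user)


outcome = onboarding("ada@example.com")
```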

Observability and Debugging

Because every step is checkpointed, the engine holds a complete execution history for each workflow instance. Most platforms expose that history through dashboards, logs, and traces, which makes it much easier to debug, audit, and optimize complex flows.

AI Agents and Microservice Orchestration

Durable workflows are increasingly used to coordinate LLM calls, agents, and microservices, ensuring that multi‑step AI pipelines don’t lose progress when a call fails or times out.

Major Durable‑Workflow Platforms Today

Here are some of the leading durable‑workflow platforms shaping modern architectures:

- Temporal – Open‑source, code‑first workflow engine that lets you write workflows as plain code (SDKs include Go, Java, Python, and TypeScript). It provides automatic retries and timeouts, supports compensation (saga) patterns written in workflow code, and is widely used for microservices and AI‑agent orchestration.

- Azure Durable Functions – Microsoft’s serverless durable‑workflow offering on Azure. It’s ideal for event‑driven, long‑running serverless flows tightly integrated with the Azure ecosystem.

- AWS Step Functions – Amazon’s visual, JSON‑based state‑machine workflows. It’s widely used for AWS‑native apps and offers a visual editor for modeling and debugging complex flows.

- Camunda Platform 8 – BPMN‑based workflow and decision automation platform. It’s popular in business‑process‑heavy and compliance‑sensitive environments, with strong support for human tasks and complex routing.

- Inngest – Event‑driven durable‑workflow platform focused on background jobs and AI workflows. It’s lightweight, developer‑friendly, and designed for modern, event‑driven architectures.

- Hatchet – Open‑source durable task queue and workflow engine that runs on Postgres. It’s well‑suited for AI agents and background tasks where durability and distribution matter.

- Render Workflows – Durable workflows tailored for AI and LLM workloads, with automatic retries, distributed execution, and strong durability guarantees.

- DBOS / Postgres‑based workflows – Platforms that build durable workflows directly on top of Postgres, using the database as the single source of truth for state and checkpoints.

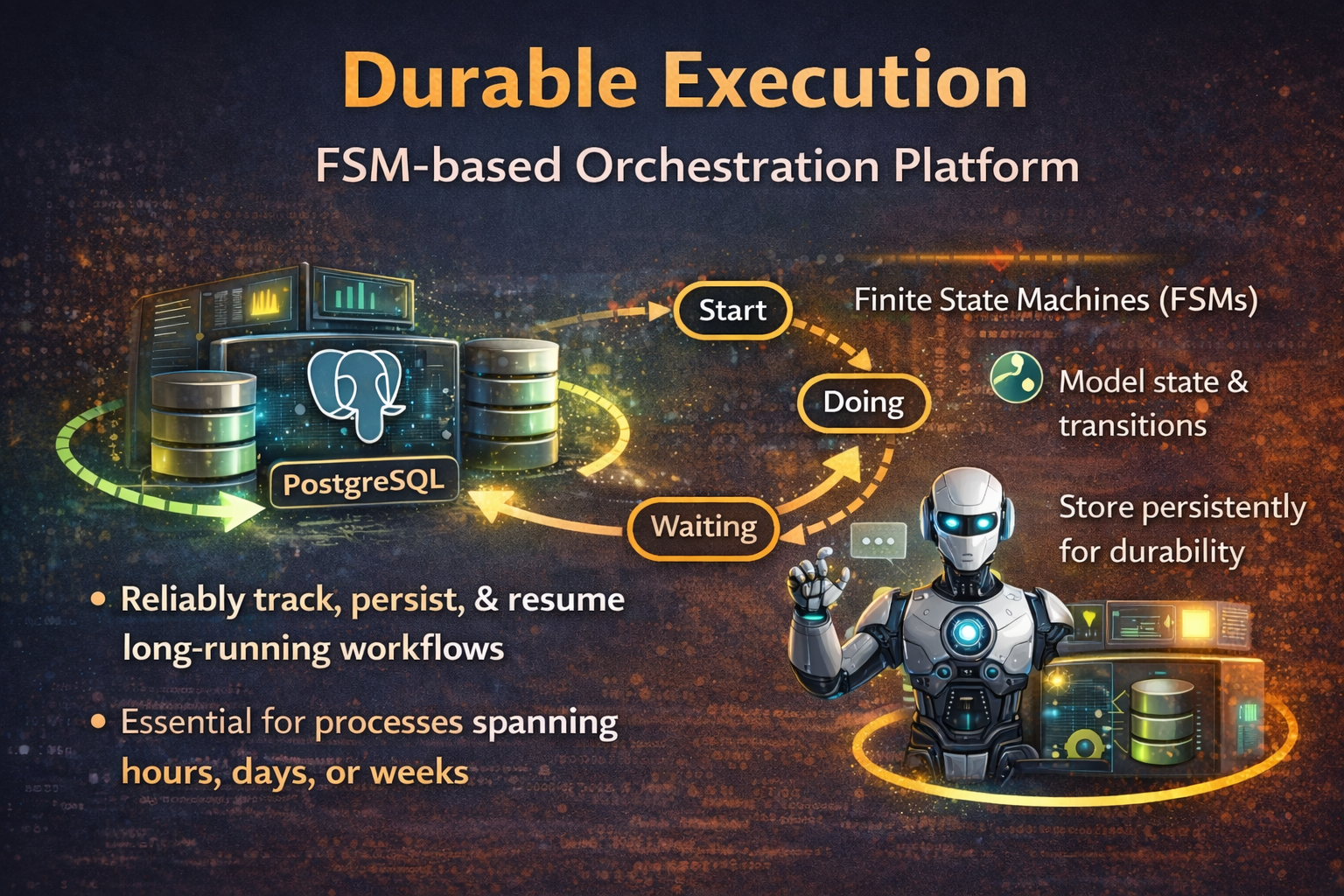

How Durable Execution is Related to FSM

Finite State Machines (FSMs) are a natural fit for durable workflows because they provide a clear, predictable way to model long‑running processes, handle interruptions, and persist state across restarts.

How FSMs Help Durable Workflows

1. Explicit States and Transitions

- An FSM defines a finite set of states (e.g., Pending, Processing, Failed, Completed) and allowed transitions between them (e.g., Pending → Processing only when a payment succeeds).

- This makes workflows deterministic and auditable: you always know what state a workflow instance is in and how it got there.
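A transition table is one simple way to express this. The sketch below uses the four states named above; the event names (`payment_succeeded`, `shipped`, and so on) and the helper functions are assumptions made for illustration.

```python
from enum import Enum


class State(Enum):
    PENDING = "Pending"
    PROCESSING = "Processing"
    FAILED = "Failed"
    COMPLETED = "Completed"


# (current state, event) -> next state. Anything not listed is not allowed.
TRANSITIONS = {
    (State.PENDING, "payment_succeeded"): State.PROCESSING,
    (State.PENDING, "payment_failed"): State.FAILED,
    (State.PROCESSING, "shipped"): State.COMPLETED,
    (State.PROCESSING, "error"): State.FAILED,
}


class InvalidTransition(Exception):
    pass


def transition(state, event):
    """Return the next state, or raise if the event is not allowed here."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise InvalidTransition(f"{event!r} not allowed in {state.value}") from None


def replay(events, start=State.PENDING):
    """Apply events in order and return every state visited (the audit trail)."""
    history = [start]
    for event in events:
        history.append(transition(history[-1], event))
    return history


trail = replay(["payment_succeeded", "shipped"])

try:
    transition(State.PENDING, "shipped")  # shipping before payment is rejected
    rejected = False
except InvalidTransition:
    rejected = True
```

Because the table is plain data, the same structure can be logged, diffed, or rendered as a diagram.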

2. Persistence and Resumability

- For durable workflows, the current FSM state (plus context like variables or timers) is saved to a database or durable store.

- When a service restarts or a task fails, the engine reloads the state and continues from the last valid state instead of starting over—just like a human who remembers “I was at step 3.”
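The persistence step can be sketched as follows, assuming SQLite as the store and a hypothetical `instances` table with one row per workflow instance. Closing and reopening the connection stands in for a process restart.

```python
import json
import os
import sqlite3
import tempfile


def open_store(path):
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS instances ("
        "id TEXT PRIMARY KEY, state TEXT NOT NULL, context TEXT NOT NULL)"
    )
    conn.commit()
    return conn


def save(conn, instance_id, state, context):
    """Write the current FSM state and its context as one row."""
    conn.execute(
        "INSERT OR REPLACE INTO instances (id, state, context) VALUES (?, ?, ?)",
        (instance_id, state, json.dumps(context)),
    )
    conn.commit()


def load(conn, instance_id):
    """Return (state, context) for an instance, or None if it is unknown."""
    row = conn.execute(
        "SELECT state, context FROM instances WHERE id = ?", (instance_id,)
    ).fetchone()
    if row is None:
        return None
    return row[0], json.loads(row[1])


path = os.path.join(tempfile.mkdtemp(), "workflows.db")

conn = open_store(path)
save(conn, "order-42", "Processing", {"step": 3, "retries": 1})
conn.close()  # simulate the service going down

conn = open_store(path)  # simulate the service coming back up
state, context = load(conn, "order-42")
conn.close()
```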

3. Error Handling and Rollback

- FSMs can encode failure states (Failed, Retry, Compensating) and define how to move back or forward when things go wrong.

- This supports patterns like retries, timeouts, and compensation logic (e.g., “undo” a payment if shipping fails).
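The compensation pattern can be sketched as a small saga runner: each step is paired with an undo action, and a failure triggers the undo actions of completed steps in reverse order. The function `run_saga` and the step names are hypothetical.

```python
def run_saga(steps):
    """steps is a list of (name, action, compensation) tuples.

    Returns (final_state, log). On failure, already-completed steps
    are compensated in reverse order before reporting "Failed".
    """
    log = []
    completed = []
    for name, action, compensation in steps:
        try:
            action()
        except Exception:
            # Enter the compensating phase: undo what has been done so far.
            for done_name, undo in reversed(completed):
                undo()
                log.append(f"undid {done_name}")
            return "Failed", log
        log.append(f"did {name}")
        completed.append((name, compensation))
    return "Completed", log


def charge_payment():
    pass  # pretend the payment went through


def refund_payment():
    pass  # pretend the refund went through


def ship_order():
    raise RuntimeError("carrier unavailable")


def cancel_shipment():
    pass


final_state, log = run_saga([
    ("charge_payment", charge_payment, refund_payment),
    ("ship_order", ship_order, cancel_shipment),
])
```

Here shipping fails, so the payment is refunded and the instance ends in Failed. A production version would also persist the log so compensation itself can survive a crash.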

4. Concurrency and Safety

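- Each workflow instance is in exactly one state at a time. As long as the engine applies every transition atomically (for example, inside a transaction or with a compare‑and‑set on the stored state), only valid transitions happen and race conditions are avoided, even when multiple workers or services are involved.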
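One common way to get this guarantee is a conditional update: the transition only applies if the stored state still matches what the worker expected. The sketch below assumes SQLite and a hypothetical `instances` table; two workers race for the same transition and only one wins.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE instances (id TEXT PRIMARY KEY, state TEXT NOT NULL)")
conn.execute("INSERT INTO instances VALUES ('order-42', 'Pending')")
conn.commit()


def try_transition(conn, instance_id, expected, new):
    """Move the instance to `new` only if it is still in `expected`.

    Returns True if this caller performed the transition.
    """
    cur = conn.execute(
        "UPDATE instances SET state = ? WHERE id = ? AND state = ?",
        (new, instance_id, expected),
    )
    conn.commit()
    return cur.rowcount == 1


# Both workers read "Pending" earlier and now try to claim the instance.
worker_a = try_transition(conn, "order-42", "Pending", "Processing")
worker_b = try_transition(conn, "order-42", "Pending", "Processing")

final_state = conn.execute(
    "SELECT state FROM instances WHERE id = 'order-42'"
).fetchone()[0]
```

The second call matches no row because the state has already changed, so the losing worker can back off instead of doing duplicate work.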

How FSMs Are Implemented in Durable Workflows Today

Modern durable‑workflow engines either use an explicit FSM model (like AWS Step Functions) or implement an internal FSM‑like structure (like Temporal).

1. Explicit FSM‑Style Engines

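- AWS Step Functions: You define workflows in the Amazon States Language, a JSON format with a StartAt field and a States map. Each state has a Type (such as Task, Choice, Wait, Parallel, Succeed, or Fail), and transitions are expressed through Next fields or the rules of a Choice state. The engine interprets this JSON as an FSM: each state corresponds to a node, and transitions are driven by state output or conditions.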
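For a sense of what such a definition looks like, here is a small sketch built as a Python dict and serialized to JSON. The field names (StartAt, States, Type, Next, Choices, Default, End-style terminal states) follow the Amazon States Language; the order flow itself, the state names, and the Lambda ARN are placeholders invented for this example. The `edges` helper is not part of any AWS API; it only lists the transitions to show the FSM shape.

```python
import json

definition = {
    "Comment": "Hypothetical order flow",
    "StartAt": "ChargePayment",
    "States": {
        "ChargePayment": {
            "Type": "Task",
            # Placeholder ARN, not a real function.
            "Resource": "arn:aws:lambda:us-east-1:123456789012:function:charge-payment",
            "Next": "CheckPayment",
        },
        "CheckPayment": {
            "Type": "Choice",
            "Choices": [
                {"Variable": "$.status", "StringEquals": "PAID", "Next": "WaitForShipping"}
            ],
            "Default": "PaymentFailed",
        },
        "WaitForShipping": {"Type": "Wait", "Seconds": 60, "Next": "Done"},
        "PaymentFailed": {
            "Type": "Fail",
            "Error": "PaymentError",
            "Cause": "Payment was declined",
        },
        "Done": {"Type": "Succeed"},
    },
}


def edges(defn):
    """List (from_state, to_state) pairs implied by Next, Choices, and Default."""
    out = []
    for name, state in defn["States"].items():
        if "Next" in state:
            out.append((name, state["Next"]))
        for choice in state.get("Choices", []):
            out.append((name, choice["Next"]))
        if "Default" in state:
            out.append((name, state["Default"]))
    return out


as_json = json.dumps(definition, indent=2)
transitions = edges(definition)
```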

- XState / state‑machine libraries (web, Node, etc.): Libraries like XState let you define hierarchical FSMs (statecharts) in JavaScript/TypeScript and persist the current state to storage or a backend. These are often used to drive UI‑driven or microservice‑driven workflows that must survive page refreshes or service restarts.

2. Code‑First Durable Engines (Implicit FSM)

- Temporal: Workflows are written as regular code (Go, Java, Python, TypeScript), but each workflow execution behaves like an FSM under the hood. Temporal does not snapshot the call stack. Instead, it records an event history (activity results, timers, signals) as the workflow progresses. After a failure, a worker re‑runs the workflow code against that history, which is why workflow code must be deterministic, and execution continues from the point where the history ends.

- Azure Durable Functions / similar: Orchestrator functions maintain a logical state machine via control‑flow (if/else, loops, awaits) and checkpoints. The runtime persists execution history and replays it to reconstruct the current state after restarts.
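The replay idea behind these code‑first engines can be approximated with a Python generator. This is a toy model, not how Temporal or Durable Functions are implemented: the workflow yields activity requests, a hypothetical `run` driver records each result in a history list, and on a later run the recorded results are fed back in instead of re‑executing the activities.

```python
def order_workflow():
    # Workflow code must be deterministic: same history in, same requests out.
    payment = yield ("charge", 100)
    shipment = yield ("ship", payment)
    return f"{payment}/{shipment}"


def run(workflow_fn, activities, history):
    """Drive the workflow, replaying recorded results before executing new ones.

    Returns (workflow_result, names_of_activities_actually_executed).
    """
    gen = workflow_fn()
    executed = []
    position = 0
    try:
        request = next(gen)
        while True:
            if position < len(history):
                result = history[position]  # replay: no side effects
            else:
                name, arg = request
                result = activities[name](arg)  # real execution
                history.append(result)  # record before moving on
                executed.append(name)
            position += 1
            request = gen.send(result)
    except StopIteration as stop:
        return stop.value, executed


ship_attempts = {"n": 0}


def charge(amount):
    return f"charged:{amount}"


def ship(payment):
    ship_attempts["n"] += 1
    if ship_attempts["n"] == 1:
        raise RuntimeError("worker crashed")
    return "shipped"


activities = {"charge": charge, "ship": ship}
history = []  # a real engine would keep this in durable storage

try:
    run(order_workflow, activities, history)  # crashes during "ship"
except RuntimeError:
    pass

result, executed = run(order_workflow, activities, history)  # resumes via replay
```

On the second run the charge is not repeated: its result comes from the history, and only `ship` actually executes.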

This approach allows you to build robust, fault-tolerant workflow engines where business logic is modeled as state transitions, and the system can always recover and continue processing from the last known state.

In the next post, we will explore finite state machines backed by PostgreSQL.